Guest post by Michael Betancourt.

I just finished Box, Hunter, and Hunter (Statistics for Experimenters) and I cannot praise it enough. There were multiple passages where I literally giggled. In fact, I may have been a bit too enthusiastic in tagging quotes beyond “all models are wrong but some are useful”; there are far too many to share here.

I wish someone had shared this with me when I was first learning statistics, instead of the usual statistics textbooks that treat model development as an irrelevant detail. So many of the elements that make this book great remain extremely relevant to statistics today. Some examples:

- The perspective of learning from data only through the lens of the statistical model. The emphasis on sequential modeling, using previous fits to direct better models, and on sequential experimentation, using past fits to design better-targeted experiments.

- The fixation on checking model assumptions, especially with interpretable visual diagnostics that capture not only residuals but also meaningful scales of deviation. These are essentially proto versions of the visual predictive checks I use today.

- The distinction between empirical models and mechanistic models, and the treatment of empirical linear models as Taylor expansions of mechanistic models with covariates as _deviations_ around some nominal value. Those who have taken my course know how important I think this is.

- The emphasis that every model, even a mechanistic one, is an approximation and should be treated as such.

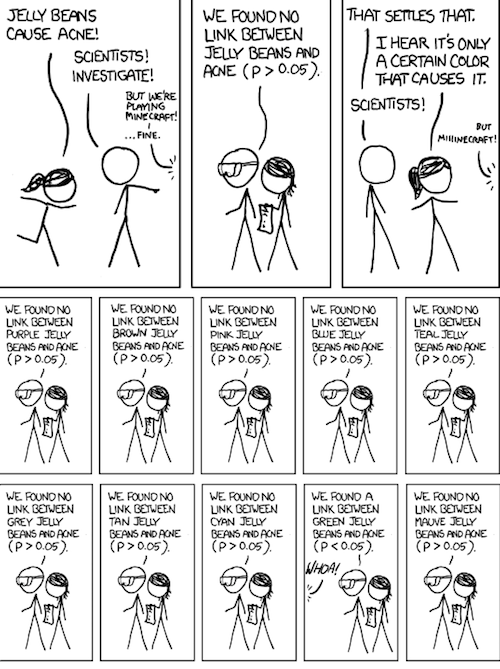

- The reframing of frequentist statistical tests as measures of signal-to-noise ratios.

- The importance of process drift and autocorrelation in data when experimental configurations are not or cannot be arbitrarily randomized.

- The diversity of examples and exercises using real data from real applications with detailed contexts, including units everywhere.
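To make the Taylor-expansion view of empirical linear models a bit more concrete, here is a minimal sketch in notation chosen purely for illustration (it is not taken from the book). Suppose a mechanistic model gives the expected response as a smooth function $f(\mathbf{x})$ of covariates $\mathbf{x} = (x_1, \dots, x_K)$. Expanding to first order around a nominal configuration $\mathbf{x}_0$ gives

$$
f(\mathbf{x}) \approx f(\mathbf{x}_0) + \sum_{k=1}^{K} \left. \frac{\partial f}{\partial x_k} \right|_{\mathbf{x}_0} (x_k - x_{0,k}),
$$

so an empirical linear model $y = \beta_0 + \sum_{k} \beta_k \, \Delta x_k$ written in terms of the deviations $\Delta x_k = x_k - x_{0,k}$ identifies the intercept $\beta_0$ with $f(\mathbf{x}_0)$ and each slope $\beta_k$ with a local partial derivative. The approximation is only trustworthy for small deviations, which is one reason the choice of nominal value matters.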

Really, the only reason I wouldn’t recommend this as an absolute must-read is that the focus on linear models and the use of frequentist methods do limit the relevance of the text to contemporary Bayesian applications a bit.

Texts like these make me even more frustrated by the desire to frame movements like data science as revolutions, a framing that gives people license to ignore the accumulated knowledge of applied statisticians.

Academic statistics has no doubt largely withdrawn into theory, with an ever-shrinking overlap with applications, but there is so much relevant wisdom in older applied statistics texts like these that doesn’t need to be rediscovered, just reframed in a contemporary context.

Oh, I forgot perhaps the best part! BHH continuously emphasizes the importance of working with domain experts in the design of experiments and throughout the entire analysis, with lots of anecdotes demonstrating how powerful that collaboration can be.

I felt so much less alone every time they talked about experimental designs not being implemented properly, and the subtle effects that can have on the data and the serious effects it can have on the resulting inferences if not taken into account.

Michael Betancourt, PhD, Applied Statistician – long story short, I am a once and future physicist currently masquerading as a statistician in order to expose the secrets of inference that statisticians have long kept from scientists. More seriously, my research focuses on the development of robust statistical workflows, computational tools, and pedagogical resources that bridge statistical theory and practice and enable scientists to make the most out of their data.

Website: betanalpha

Patreon: Michael Betancourt
